It might be OK now, but for a while there before they hard forked, you set yourself up for issues if you updated majors without being aware of breaking changes.


Smart TV companies hate this one simple trick!

Pack the TV up in the original box and take it back for a full refund. And tell them why.

This is the Way.

1·18 days ago

OK, that looks pretty rudimentary. No MQTT that I could find, no clue how or if it does object recog. Not very serious.

1·18 days ago

I’d forgotten about that one. I’m going to try it again right now. Thank you.

Any pointers on object detection with that?

2·18 days ago

I’m not super familiar with the integrated one, but I think you can adjust confidence levels in at least one place. That might improve that. Or go back to Deepstack.

2·19 days ago

Try running a Deepstack container in docker and point the AI feature at that container. It’s much better IME.

1·19 days ago

Every year or so I try to go to Frigate from Blue Iris so I can get rid of my last Windows box. But functionally they aren’t in the same league. Just the PTZ controls on Frigate drive me back to BI within minutes, besides all the rest of the features.

Some day…

4·19 days ago

If that makes my thunderbird respect the startup position, I’ll be so happy…

The code is the comment anyway. The only thing you should comment is things that are way the hell out in left field about why that bit of code exists when it shouldn’t.

4·25 days ago

Probably use Gemma4 if your machine has the chops for it.

Rspamd seems to be common; it’s included in the Mailcow stack and others. It seems to work pretty well. I’ve been on Mailcow for several years now with no major spam issues after I dialed it up a bit.

The term you would search for here is “split-horizon DNS”. Assuming you’re using a real domain name with hosts, you want a DNS server inside that resolves the LAN address, and the outside DNS server for everyone else resolves your WAN address (which presumably you reverse-proxy to the inside host).

Even better is to not expose the service to the outside at all: use a VPN like Tailscale, then use its MagicDNS service on the Tailscale network to keep everything behind the firewall.

Every service you expose to the outside is more attack surface.
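If it helps to picture the split-horizon idea, here is a rough Python sketch of the decision the two DNS views make between them. It is not a DNS server, just the logic. The hostname, the LAN range, both addresses, and the `resolve` function are all made up for illustration; swap in your own.

```python
import ipaddress
from typing import Optional

# Hypothetical values for illustration only; none of these come from a real setup.
LAN_NETWORK = ipaddress.ip_network("192.168.1.0/24")
RECORDS = {
    "app.example.com": {
        "internal": "192.168.1.50",  # LAN address, served by the inside DNS server
        "external": "203.0.113.10",  # WAN address, served by the outside DNS server
    },
}


def resolve(hostname: str, client_ip: str) -> Optional[str]:
    """Return the address a split-horizon setup would hand to this client."""
    record = RECORDS.get(hostname)
    if record is None:
        return None
    # Clients on the LAN get the internal view; everyone else gets the external one.
    on_lan = ipaddress.ip_address(client_ip) in LAN_NETWORK
    return record["internal" if on_lan else "external"]
```

In a real deployment the "inside" view would live on your LAN resolver and the "outside" view with your public DNS host; the point is only that the same name returns a different answer depending on where the query comes from.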

6·28 days ago

I had a car dealership job where I was to add new servers to a new rack and recable the room. I walked into a room with about half a dozen servers balanced on a pile of cat5, BNC and serial cables about 4’ high. I spent 3 weeks untangling cables, removing dead cable, decommissioning serial and token ring networks and re-terminating or re-running ethernet that didn’t test well.

Pretty much everything was done by scream test because nothing was marked. I found an ancient server, still used occasionally for manuals, that was drywalled into an old closet in the shop. I only found it when I traced down a line I had disconnected and one of the mechanics asked where his manuals had gotten to. That server was shut down every night when they turned off the shop lights and booted back up every morning, for who knows how many years, when someone came in to work and turned on the lights.

I eventually got to the point I could set up my rack and SANs/servers, patch everything over from the network rack I mounted on the wall, and get guys going on the workstations.

We had a series of meetings after that with the sales team about getting a technical appraisal before we sold our equipment into dealerships. And every dealership I worked in after that was pretty similar.

Honestly, it was an amazingly satisfying feeling at the end to look in that room after I was done. I get a little shiver 20 years later thinking about it now.

2·1 month ago

Yah, you’ll want a thick skin around this group and don’t get herded into groupthink, but you seem to have that covered already.

There are places to look for help if you need it (I’m happy to take DMs) but unfortunately I’d have to recommend reddit/r/linuxquestions as probably the most welcoming. While the ArchWiki is a great source of information, stay far away from the Arch forums unless you’re wearing blast armor. Format a question the wrong way and you’ll get fragged. I’d say the Debian forums are probably the easiest to get along with, it seems populated with adults.

3·1 month ago

Finally, someone that actually RTFA.

The systemd fiasco really tore the heart out of my respect for the Linux community, especially the one right here. One asshole out there pretty much doxxing an opensource developer for putting a field in the same PII area as address and phone number, and the rest of the assholes were looking to crucify the guy instead of shaming the doxxer for it. And nobody (well, few people) saw what was wrong with that.

And then, yes, the init wars, and shitting on devs for their choices about packaging, and shitting on them for not liking how they run their project, for using AI, and and and.

The downvotes this comment garnered tell me it hit a nerve, and that’s why I posted it. I don’t expect anyone to actually take it to heart and change, but when I was pushing back here against the pitchfork mob over the age verification thing, I hoped for a better response. Typical, as the Linux community likes to shoot the messenger.

In the end, driving people away from desktop Linux works in our favor, because it puts off the day that Linux enshittifies. So I can’t be too upset.

31·1 month ago

I thought it was quite eloquent. The downvotes tell me it hit a nerve, and as another commenter says, the recent “field added in systemd so let’s hang someone” fiasco lines up.

1·1 month ago

I’d be happy with an electric chore tractor the size of a 7230 or 6R.

I can’t see electric combines any time soon. The sheer amount of power they go through would make that untenable.

21·1 month ago

You would figure that most developers would just work on the platform that gives them the tools to do what they do most easily. Yet the number that won’t use Linux and instead seemingly cripple themselves by developing on Mac or Windows frankly astounds me. But I’m also very used to Linux’s pain points, having used it since the 90s. If I only ever got my software with an .exe or .dmg download, I’d probably shy away as well.

I couldn’t imagine working with Docker Desktop or WSL, or depending on Brew to have everything I need, but that’s the reality for many, because it’s familiar and simple. The underlying operating system is abstracted away so they don’t have to deal with it, and sticking their nose in Linux is scary and confusing, especially if you’re expected to deal with the panoply of installation methods available to your software. When I dealt with Windows in the long-long ago, you built a MSinstaller package and went on your merry way.

And if you look at new developers today, do you think they’d put up with RBBS for a minute if that’s how they got their software out?

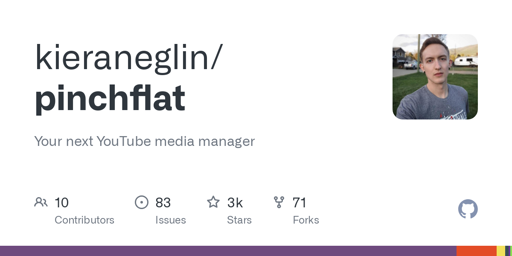

That is getting pretty far behind unless you’re leaving yourself open to go back to Gitea. I think 12 was the hard fork.