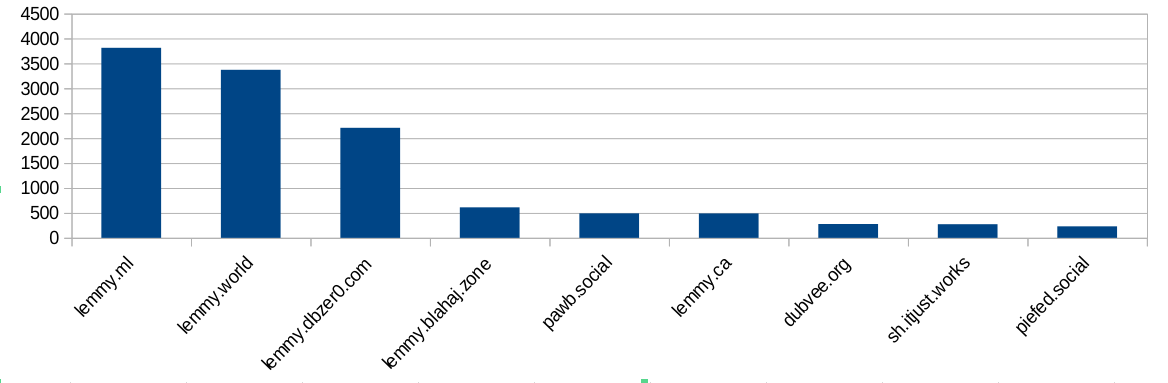

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

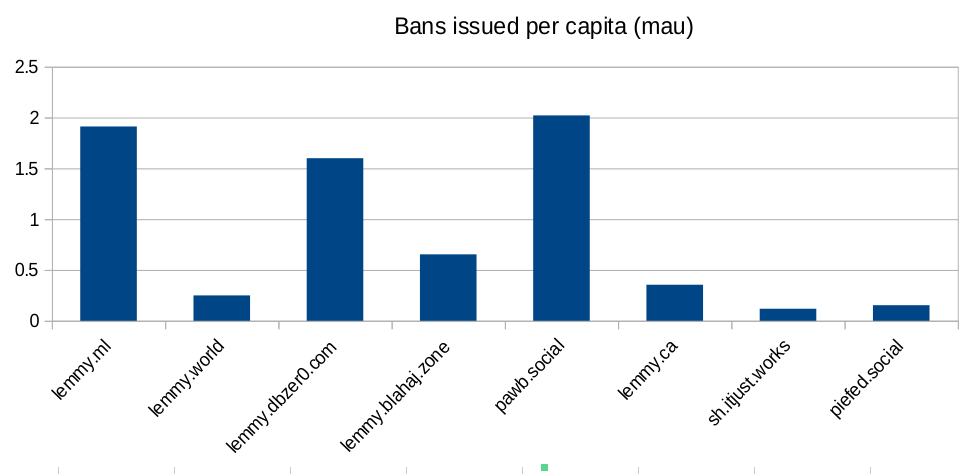

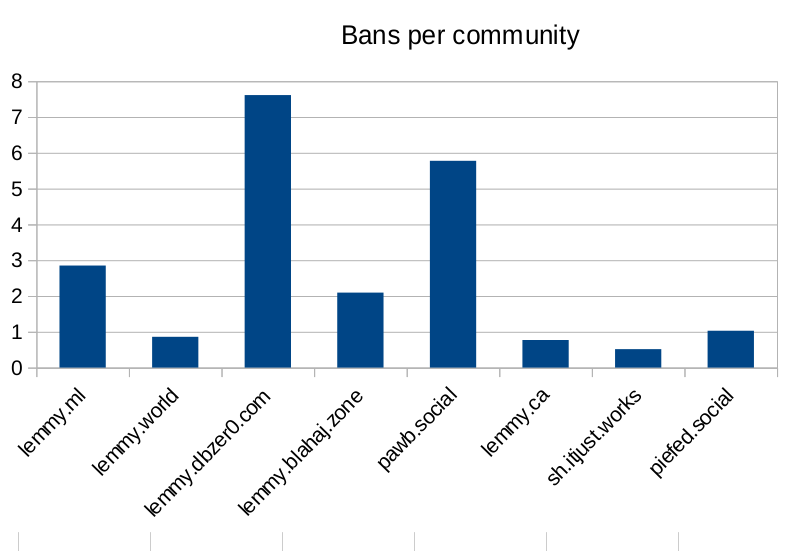

Ok, so lemmy.ml, dbzer0 and pawb issue an outsized number of bans for the number of users they have… But surely the number of communities the instance hosts is going to mean they have to ban more? Bans are used to moderate communities, not just to shield their user-base from the outside. Let’s look at the number of bans per community hosted:

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
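For anyone who wants to redo the two ratios above, here is a minimal sketch of the arithmetic. The instance names and numbers are made-up placeholders, not the real modlog figures, and `InstanceStats` and `ban_ratios` are names invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    name: str
    bans: int         # total bans found in the modlog
    users: int        # number of users on the instance
    communities: int  # number of communities the instance hosts


def ban_ratios(stats):
    """Return {name: (bans_per_user, bans_per_community)}.

    A ratio is None when its denominator is zero.
    """
    result = {}
    for s in stats:
        per_user = s.bans / s.users if s.users else None
        per_community = s.bans / s.communities if s.communities else None
        result[s.name] = (per_user, per_community)
    return result


# Placeholder data only, NOT the real numbers from the spreadsheet.
sample = [
    InstanceStats("example-a", bans=200, users=1000, communities=50),
    InstanceStats("example-b", bans=300, users=10000, communities=20),
]

ratios = ban_ratios(sample)

# Rank instances by each ratio, highest first.
by_user = sorted(ratios, key=lambda n: ratios[n][0], reverse=True)
by_community = sorted(ratios, key=lambda n: ratios[n][1], reverse=True)

for name, (per_user, per_community) in ratios.items():
    print(f"{name}: {per_user:.3f} bans/user, {per_community:.1f} bans/community")
```

With the placeholder data the two rankings disagree, which is why it is worth looking at both ratios rather than just raw ban counts.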

Yes, generating images with AI is in their instance description. They think computers doing our art for us is “anarchist”.

They are not completely wrong though. It’s a centerpiece of anarcho-capitalism.

Aren’t you that person who thinks AI is “enslavement”?

They also think AI is not compatible with veganism.

Both of those positions are reasonable and tame compared to the majority of Their beliefs.

I don’t think ChatGPT is smart enough to offer meaningful consent to work for humans. It’s got the intelligence of a 13 year old at best. And we don’t understand where consciousness comes from in humans, so assuming ChatGPT is a p-zombie is an ethical risk I don’t think we should be taking.

It doesn’t have intelligence at all. It can’t think. It can’t have consciousness. That’s not how any of this works. It’s just fancy next word prediction. You seem to have a genuine misunderstanding of the technology at a fundamental level.

You’re wrong, there is a risk that it may experience qualia.

It’s not capable of experiencing anything. Everything we’re doing with AI and LLMs is nowhere remotely near genuine intelligence or an AGI or anything like that. Everything we have right now is nothing more than fancy autocomplete, and it’s not even particularly great at that in the first place. You have fundamental misunderstandings of the technology to a cartoonish degree.

You’re wrong, you don’t know how the human brain produces subjective sensation.

Yeah we do. With neurons and electrochemical signals. Seriously bro go get you some basic education.

You’re seriously saying you’ve solved the hard problem of consciousness, which has stumped philosophers and neuroscientists for thousands of years? You know how the brain creates consciousness?

Well then where’s your nobel prize, Einstein?

LLMs don’t have continuous processes, there’s quite literally nothing there that could even feasibly be conscious. It takes a bunch of text as an input, puts it through a whole lot of predetermined calculations, then outputs text or an image or whatever.

There’s no emotions, no memory, no learning. If you don’t tell it something, it’s inert. It can’t experience suffering because it can’t experience anything. It’s an algorithm. It has the same claim to consciousness that WinRAR does. There’s a zero percent risk it experiences anything, let alone suffering.

Honestly, a desktop running Windows or Linux for example imo has a stronger claim to consciousness than ChatGPT does. Or maybe a Mii in Tomodachi Life, those seem to be able to become “sad”.

The environmental impact of AI is a much better ‘vegan’ reason not to use it. Although by not using it, you may in effect be “killing” it…

Do you have proof that continuity is a necessary component of qualia? I would have thought the opposite, since I experience a big break in the continuity of My experience every night when I go to sleep. I’m concerned that there’s a risk continuity may not be necessary, in which case using genAI to serve humans poses a serious ethical problem in addition to the pollution, child abuse, and cognitive damage.

Who says qualia are required for consciousness? Why isn’t your smartphone conscious? Or a desktop PC? We’ve had chatbots for ages, those were never considered conscious by anyone. What is it about LLMs specifically that suggests consciousness to you?

Also calling people OpenAI stooges for arguing LLMs aren’t conscious is a bit odd, given that OpenAI heavily marketed ChatGPT as being “so smart” it might be conscious. To them it’s a selling point, not an ethical roadblock.

But even ignoring the zero percent chance that LLMs are conscious, there’s also the additional hurdle of assuming that LLMs can indeed “suffer” (whatever that might mean to an algorithm) and that LLMs indeed suffer from serving humans. Plus the whole “if it doesn’t serve a human, its existence essentially ceases to be” issue with your argument, which arguably would be even less ethical.

That’s not how sleeping works either, since you (presumably) have unconscious processes that never stop. Or do your brain, heart, and organs shut down for you during sleep? You need to go to school, my man. You seem to have a curious nature, but wow, you have no real understanding of how any of the stuff you’re talking about actually works. Learn first, then form opinions.

So you’re arguing that continuity is required for consciousness, because unconscious sleeping people have continuity of consciousness. Are you a troll?

@Grail @alzjim

Always funny to me how most people who are strongly claiming AI is/might be conscious are also strong AI users/involved in its development. If there’s consciousness there, you would think making AI your personal slave and constantly reshaping and remodelling it as you see fit would be kinda problematic, but these people always seem to want to have it both ways.

Yeah, and the anti AI people mostly say it’s a p-zombie and there’s nothing wrong with using it for sex. It’s weird and backwards.

I’m all about being cautious. I don’t want to make a mistake we can’t take back. If we normalise using AI and then it turns out to be capable of suffering, people will be stubborn about giving it up.

I get the feeling that research is circling around consciousness arising from quantum effects inside nerve cells. If it’s not that, and it’s just an emergent property of complex neural networks, then:

Four year old humans are definitely conscious. I used to be four, and I can remember being conscious. If we build a mechanical four year old, I don’t see any reason that thing is going to take over the world. Unless it turns out like Calvin.